Ultra-Low Power Devices for Robot Collaboration and Quantum Encryption

An ultra-low power hybrid chip developed by researchers from the Georgia Institute of Technology and inspired by the brain could help give palm-sized robots the ability to collaborate and learn from their experiences. Combined with new generations of low-power motors and sensors, the new application-specific integrated circuit (ASIC) - which operates on milliwatts of power - could help intelligent swarm robots operate for hours instead of minutes.

To conserve power, the chips use a hybrid digital-analog time-domain processor in which the pulse-width of signals encodes information. The neural network IC accommodates both model-based programming and collaborative reinforcement learning, potentially providing the small robots larger capabilities for reconnaissance, search-and-rescue and other missions.

In a separate development, MIT researchers have announced a novel cryptography circuit that can be used to protect low-power "internet of things" (IoT) devices in the coming age of quantum computing.

Quantum computers can in principle execute calculations that today are practically impossible for classical computers. Bringing quantum computers online and to market could one day enable advances in medical research, drug discovery, advanced IoT devices, robot swarms, and other applications. But there's a catch: If hackers also have access to quantum computers, they could potentially break through the powerful encryption schemes that currently protect data exchanged between devices.

These developments in robotics and quantum encryption were both revealed at the recently held IEEE International Solid-State Circuits Conference (ISSCC).

Brain-Inspired Robot Swarms

Researchers from the Georgia Institute of Technology demonstrated robotic cars driven by the unique ASICs at the 2019 ISSCC. The research was sponsored by the Defense Advanced Research Projects Agency (DARPA) and the Semiconductor Research Corporation (SRC) through the Center for Brain-inspired Computing Enabling Autonomous Intelligence (CBRIC).

"We are trying to bring intelligence to these very small robots so they can learn about their environment and move around autonomously, without infrastructure," said Arijit Raychowdhury, associate professor in Georgia Tech's School of Electrical and Computer Engineering. "To accomplish that, we want to bring low-power circuit concepts to these very small devices so they can make decisions on their own. There is a huge demand for very small, but capable robots that do not require infrastructure."

The cars demonstrated by Raychowdhury and graduate students Ningyuan Cao, Muya Chang and Anupam Golder navigate through an arena floored by rubber pads and surrounded by cardboard block walls. As they search for a target, the robots must avoid traffic cones and each other, learning from the environment as they go and continuously communicating with each other.

The cars use inertial and ultrasound sensors to determine their location and detect objects around them. Information from the sensors goes to the hybrid ASIC, which serves as the "brain" of the vehicles. Its instructions then go to a Raspberry Pi controller, which relays commands to the electric motors.

In palm-sized robots, three major systems consume power: the motors and controllers used to drive and steer the wheels, the processor, and the sensing system. In the cars built by Raychowdhury's team, the low-power ASIC means that the motors consume the bulk of the power. "We have been able to push the compute power down to a level where the budget is dominated by the needs of the motors," he said.

The team is working with collaborators on motors that use micro-electromechanical (MEMS) technology able to operate with much less power than conventional motors.

"We would want to build a system in which sensing power, communications and computer power, and actuation are at about the same level, on the order of hundreds of milliwatts," said Raychowdhury, who is the ON Semiconductor Associate Professor in the School of Electrical and Computer Engineering. "If we can build these palm-sized robots with efficient motors and controllers, we should be able to provide runtimes of several hours on a couple of AA batteries. We now have a good idea what kind of computing platforms we need to deliver this, but we still need the other components to catch up."
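
That runtime estimate can be checked with back-of-envelope arithmetic. The sketch below is only an illustration: the 3 watt-hours per AA cell and the 300 milliwatts per subsystem are assumed figures chosen to fit "hundreds of milliwatts," not measurements from the Georgia Tech robots, and the function name is hypothetical. It also ignores voltage-converter losses.

```python
def runtime_hours(cell_wh: float, n_cells: int, loads_mw: dict[str, float]) -> float:
    """Ideal runtime: total stored energy divided by total power draw."""
    total_watts = sum(loads_mw.values()) / 1000.0
    return cell_wh * n_cells / total_watts


# Assumed power budget, one entry per subsystem named in the quote (milliwatts).
loads = {"sensing": 300.0, "compute_and_comms": 300.0, "actuation": 300.0}

# Assumed capacity: roughly 3 Wh per AA cell; real capacity varies with
# chemistry and drain rate.
hours = runtime_hours(cell_wh=3.0, n_cells=2, loads_mw=loads)
print(f"{hours:.1f} hours")  # 6 Wh / 0.9 W, about 6.7 hours
```

Under these assumptions the robot runs for several hours, which is consistent with the target Raychowdhury describes. If the motors alone drew a few watts, the same batteries would last closer to an hour or two.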

In time-domain computing, information is carried on two different voltages and encoded in the width of the pulses. That gives the circuits the energy-efficiency advantages of analog circuits along with the robustness of digital devices.
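
The idea of pulse-width encoding can be illustrated in software. This is a minimal sketch, not a model of the Georgia Tech circuit: the signal only ever takes two levels (0 or 1), and a value is represented by how many clock ticks the signal stays high. The function names and the 16-tick period are assumptions made for the example.

```python
def encode(value: int, period: int = 16) -> list[int]:
    """Represent `value` as a pulse that stays high for `value` ticks."""
    if not 0 <= value <= period:
        raise ValueError("value must fit inside one period")
    return [1] * value + [0] * (period - value)


def decode(wave: list[int]) -> int:
    """Recover the value by measuring the total time spent high."""
    return sum(wave)


def add_in_time(a: list[int], b: list[int]) -> list[int]:
    """Play one pulse after the other; the high times accumulate."""
    return a + b


pulse_five = encode(5)
pulse_nine = encode(9)
total = decode(add_in_time(pulse_five, pulse_nine))
print(total)  # 14
```

The sketch also shows the speed trade-off mentioned by the researchers: a larger value needs a longer pulse, so one number occupies many clock ticks instead of being read in a single step.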

"The size of the chip is reduced by half, and the power consumption is one-third what a traditional digital chip would need," said Raychowdhury. "We used several techniques in both logic and memory designs for reducing power consumption to the milliwatt range while meeting target performance."

With each pulse-width representing a different value, the system is slower than digital or analog devices, but Raychowdhury says the speed is sufficient for the small robots.

"For these control systems, we don't need circuits that operate at multiple gigahertz because the devices aren't moving that quickly," he said. "We are sacrificing a little performance to get extreme power efficiencies. Even if the compute operates at 10 or 100 megahertz, that will be enough for our target applications."

The 65-nanometer CMOS chips accommodate both kinds of learning appropriate for a robot. The system can be programmed to follow model-based algorithms, and it can learn from its environment using a reinforcement system that encourages better and better performance over time - much like a child who learns to walk by bumping into things.

"You start the system out with a predetermined set of weights in the neural network so the robot can start from a good place and not crash immediately or give erroneous information," Raychowdhury said. "When you deploy it in a new location, the environment will have some structures that it will recognize and some that the system will have to learn. The system will then make decisions on its own, and it will gauge the effectiveness of each decision to optimize its motion."

Communication between Robots Enables Collaboration

Communication between the robots allows them to collaborate to seek a target.

"In a collaborative environment, the robot not only needs to understand what it is doing, but also what others in the same group are doing," he said. "They will be working to maximize the total reward of the group as opposed to the reward of the individual."
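
The difference between rewarding the individual and rewarding the group can be shown with a toy example. The sketch below is not the learning algorithm on the chip: it is a two-robot, one-step scenario with a made-up payoff table, in which each robot averages the reward it observes for each action and then picks the action with the best average. All names and numbers are hypothetical.

```python
from itertools import product

YIELD, RUSH = 0, 1

# Hypothetical payoffs: (reward to robot 0, reward to robot 1) per joint action.
PAYOFF = {
    (YIELD, YIELD): (0.6, 0.6),
    (YIELD, RUSH): (0.0, 1.0),
    (RUSH, YIELD): (1.0, 0.0),
    (RUSH, RUSH): (0.2, 0.2),
}


def learn(use_group_reward: bool, sweeps: int = 10) -> list[int]:
    """Return each robot's preferred action after trying every joint action."""
    values = [[0.0, 0.0], [0.0, 0.0]]  # values[robot][action]
    counts = [[0, 0], [0, 0]]
    for _ in range(sweeps):
        for joint in product((YIELD, RUSH), repeat=2):
            individual = PAYOFF[joint]
            for robot, action in enumerate(joint):
                reward = sum(individual) if use_group_reward else individual[robot]
                counts[robot][action] += 1
                # Incremental running mean of the observed reward.
                values[robot][action] += (
                    reward - values[robot][action]
                ) / counts[robot][action]
    return [max((YIELD, RUSH), key=lambda a: v[a]) for v in values]


selfish = learn(use_group_reward=False)
cooperative = learn(use_group_reward=True)
print(selfish, cooperative)  # [1, 1] [0, 0]
```

With individual rewards both robots learn to rush and end up with a combined reward of 0.4. With the group reward both learn to yield, and the combined reward rises to 1.2.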

With their ISSCC demonstration providing a proof-of-concept, the team is continuing to optimize designs and is working on a system-on-chip to integrate the computation and control circuitry.

"We want to enable more and more functionality in these small robots," Raychowdhury added. "We have shown what is possible, and what we have done will now need to be augmented by other innovations."

This project was supported by the Semiconductor Research Corporation under grant JUMP CBRIC task ID 2777.006. CITATION: Ningyuan Cao, Muya Chang, Arijit Raychowdhury, "A 65 nm 1.1-to-9.1 TOPS/W Hybrid-Digital-Mixed-Signal Computing Platform for Accelerating Model-Based and Model Free Swarm Robotics." (2019 IEEE International Solid-State Circuits Conference).

Advances in Quantum Encryption

Today's most promising quantum-resistant encryption scheme is called "lattice-based cryptography," which hides information in extremely complicated mathematical structures. To date, no known quantum algorithm can break through its defenses. But these schemes are far too computationally intensive for IoT devices, which can only spare enough energy for simple data processing.

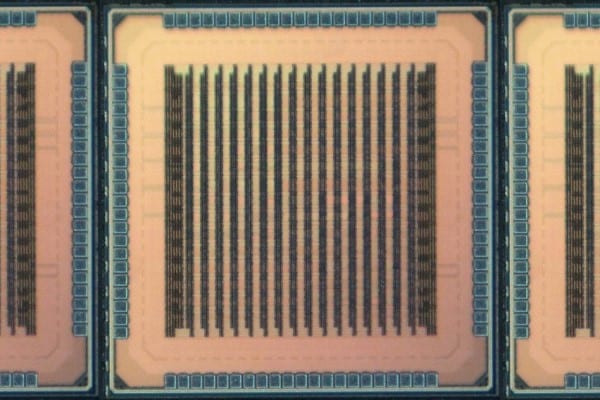

In a paper also presented at the recent ISSCC, MIT researchers describe a novel circuit architecture and statistical optimization tricks that can be used to efficiently compute lattice-based cryptography. The 2-millimeter-squared chips the team developed are efficient enough for integration into any current IoT device.

MIT researchers have developed a novel chip that can compute complex quantum-proof encryption schemes efficiently enough to protect low-power "internet of things" (IoT) devices. (Image courtesy of the researchers.)

The architecture is customizable to accommodate the multiple lattice-based schemes currently being studied in preparation for the day that quantum computers come online. "That might be a few decades from now, but figuring out if these techniques are really secure takes a long time," says first author Utsav Banerjee, a graduate student in electrical engineering and computer science. "It may seem early, but earlier is always better."

Moreover, the researchers say, the circuit is the first of its kind to meet standards for lattice-based cryptography set by the National Institute of Standards and Technology (NIST), an agency of the U.S. Department of Commerce that finds and writes regulations for today's encryption schemes.

Joining Banerjee on the paper are Anantha Chandrakasan, dean of MIT's School of Engineering and the Vannevar Bush Professor of Electrical Engineering and Computer Science, and Abhishek Pathak of the Indian Institute of Technology.

Efficient sampling

In the mid-1990s, MIT Professor Peter Shor developed a quantum algorithm that can essentially break through all modern cryptography schemes. Since then, NIST has been trying to find the most secure postquantum encryption schemes. This happens in phases; each phase winnows down a list of the most secure and practical schemes. Two weeks ago, the agency entered its second phase for postquantum cryptography, with lattice-based schemes making up half of its list.

In the new study, the researchers first implemented on commercial microprocessors several NIST lattice-based cryptography schemes from the agency's first phase. This revealed two bottlenecks for efficiency and performance: generating random numbers and data storage.

Generating random numbers is the most important part of all cryptography schemes, because those numbers are used to generate secure encryption keys that can't be predicted. The numbers are produced through a two-part process called "sampling."

Sampling first generates pseudorandom numbers from a known, finite set of values that have an equal probability of being selected. Then, a "postprocessing" step converts those pseudorandom numbers into a different probability distribution with a specified standard deviation — a limit for how much the values can vary from one another — that randomizes the numbers further. Basically, the random numbers must satisfy carefully chosen statistical parameters. This difficult mathematical problem consumes about 80 percent of all computation energy needed for lattice-based cryptography.

After analyzing all available methods for sampling, the researchers found that one method, called SHA-3, can generate many pseudorandom numbers two or three times more efficiently than all others. They tweaked SHA-3 to handle lattice-based cryptography sampling. On top of this, they applied some mathematical tricks to make pseudorandom sampling, and the postprocessing conversion to new distributions, faster and more efficient.
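
The two-part sampling process can be sketched in a few lines. The example below is an assumption-laden illustration, not the researchers' hardware design: it uses SHAKE-128 (an extendable-output function from the SHA-3 family, available in Python's `hashlib`) to produce uniform pseudorandom bits, then postprocesses them into a centered binomial distribution, one distribution commonly used in lattice-based schemes. The function names and the parameter `k` are chosen for the example.

```python
import hashlib


def uniform_bytes(seed: bytes, n: int) -> bytes:
    """Step 1: expand a seed into n uniform pseudorandom bytes with SHAKE-128."""
    return hashlib.shake_128(seed).digest(n)


def centered_binomial(seed: bytes, count: int, k: int = 8) -> list[int]:
    """Step 2: turn uniform bits into samples in [-k, k].

    Each sample is (number of ones in k bits) - (number of ones in k more
    bits), which has mean 0 and standard deviation sqrt(k / 2).
    """
    total_bits = 2 * k * count
    stream = int.from_bytes(uniform_bytes(seed, (total_bits + 7) // 8), "little")
    mask = (1 << k) - 1
    samples = []
    for i in range(count):
        chunk = stream >> (2 * k * i)
        ones_a = bin(chunk & mask).count("1")
        ones_b = bin((chunk >> k) & mask).count("1")
        samples.append(ones_a - ones_b)
    return samples


noise = centered_binomial(b"example seed", count=4096, k=8)
mean = sum(noise) / len(noise)
variance = sum((x - mean) ** 2 for x in noise) / len(noise)
print(round(mean, 2), round(variance, 2))  # close to 0 and to k / 2 = 4
```

Changing `k` changes the standard deviation of the output, which is the kind of statistical parameter the postprocessing step has to hit.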

They run this technique using energy-efficient custom hardware that takes up only 9 percent of the surface area of their chip. In the end, this makes the process of sampling two orders of magnitude more efficient than traditional methods.

Splitting the data

On the hardware side, the researchers made innovations in data flow. Lattice-based cryptography processes data in vectors, which are tables of a few hundred or thousand numbers. Storing and moving those data requires physical memory components that take up around 80 percent of the hardware area of a circuit.

Traditionally, the data are stored on a single two- or four-port random access memory (RAM) device. Multiport devices enable the high data throughput required for encryption schemes, but they take up a lot of space.

For their circuit design, the researchers modified a technique called "number theoretic transform" (NTT), which functions similarly to the Fourier transform mathematical technique that decomposes a signal into the multiple frequencies that make it up. The modified NTT splits vector data and allocates portions across four single-port RAM devices. Each vector can still be accessed in its entirety for sampling as if it were stored on a single multiport device. The benefit is that the four single-port RAM devices occupy about a third less total area than one multiport device.
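
For readers unfamiliar with the NTT, the sketch below shows the basic, unmodified transform on deliberately tiny parameters. It is a textbook illustration rather than the researchers' version: the modulus 17, the length 8 and the root of unity 2 are chosen only because they are easy to verify by hand, and the slow quadratic-time loop stands in for the fast butterfly structure real implementations use. Like the Fourier transform, the NTT turns a costly convolution of two vectors into a simple element-by-element product.

```python
Q = 17     # small prime modulus (real schemes use much larger values)
N = 8      # vector length
OMEGA = 2  # 2 has multiplicative order 8 modulo 17, so it is an 8th root of unity


def ntt(vec: list[int], omega: int = OMEGA) -> list[int]:
    """Forward transform: evaluate the vector at successive powers of omega."""
    return [
        sum(x * pow(omega, i * j, Q) for j, x in enumerate(vec)) % Q
        for i in range(N)
    ]


def intt(vec: list[int]) -> list[int]:
    """Inverse transform: use omega^-1 and scale by N^-1 (both modulo Q)."""
    n_inv = pow(N, -1, Q)
    return [(n_inv * y) % Q for y in ntt(vec, pow(OMEGA, -1, Q))]


def cyclic_multiply(a: list[int], b: list[int]) -> list[int]:
    """Multiply two vectors as polynomials modulo x^N - 1 and modulo Q."""
    return intt([(x * y) % Q for x, y in zip(ntt(a), ntt(b))])


a = [1, 2, 3, 4, 0, 0, 0, 0]
b = [5, 6, 7, 0, 0, 0, 0, 0]
print(cyclic_multiply(a, b))  # [5, 16, 0, 1, 11, 11, 0, 0]
```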

"We basically modified how the vector is physically mapped in the memory and modified the data flow, so this new mapping can be incorporated into the sampling process. Using these architecture tricks, we reduced the energy consumption and occupied area, while maintaining the desired throughput," Banerjee says.
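
One simple way to picture such a mapping is to interleave a vector across four memory banks. The article does not describe the researchers' actual layout, so the scheme below (element `i` goes to bank `i % 4`) is purely an assumed example, and the class and method names are hypothetical. A single-port bank can serve only one access per cycle, so the sketch rejects any cycle that touches the same bank twice.

```python
NUM_BANKS = 4


class BankedVector:
    """A vector interleaved across four simulated single-port memories."""

    def __init__(self, data: list[int]) -> None:
        self.length = len(data)
        # Bank b holds elements b, b + 4, b + 8, ...
        self.banks = [list(data[b::NUM_BANKS]) for b in range(NUM_BANKS)]

    @staticmethod
    def locate(index: int) -> tuple[int, int]:
        """Map a logical index to (bank, row within that bank)."""
        return index % NUM_BANKS, index // NUM_BANKS

    def read(self, index: int) -> int:
        bank, row = self.locate(index)
        return self.banks[bank][row]

    def read_cycle(self, indices: list[int]) -> list[int]:
        """Read several elements in one simulated clock cycle."""
        used = [self.locate(i)[0] for i in indices]
        if len(set(used)) != len(used):
            raise ValueError("bank conflict: one access per bank per cycle")
        return [self.read(i) for i in indices]


vec = BankedVector(list(range(100, 116)))
print(vec.read_cycle([4, 5, 6, 7]))  # [104, 105, 106, 107]
```

Four neighbouring elements land in four different banks, so they can be fetched together as if from one multiport memory. Reading indices 0 and 4 in the same cycle would fail, because both live in bank 0; avoiding such conflicts is the kind of constraint a data-flow redesign has to respect.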

The circuit also incorporates a small instruction memory component that can be programmed with custom instructions to handle different sampling techniques — such as specific probability distributions and standard deviations — and different vector sizes and operations. This is especially helpful, as lattice-based cryptography schemes will most likely change slightly in the coming years and decades.

Adjustable parameters can also be used to optimize efficiency and security. The more complex the computation, the lower the efficiency, and vice versa. In their paper, the researchers detail how to navigate these tradeoffs with their adjustable parameters. Next, the researchers plan to tweak the chip to run all the lattice-based cryptography schemes listed in NIST's second phase.

The work was supported by Texas Instruments and the TSMC University Shuttle Program.
