Intel Corporation has acquired Habana Labs, an Israel-based developer of programmable deep learning accelerators for the data center, for approximately $2 billion. The combination strengthens Intel's artificial intelligence (AI) portfolio and accelerates its efforts in the nascent, fast-growing AI silicon market, which Intel expects to exceed $25 billion by 2024.¹

"This acquisition advances our AI strategy, which is to provide customers with solutions to fit every performance need - from the intelligent edge to the data center," said Navin Shenoy, executive vice president and general manager of the Data Platforms Group at Intel. "More specifically, Habana turbo-charges our AI offerings for the data center with a high-performance training processor family and a standards-based programming environment to address evolving AI workloads."

Intel's AI strategy is grounded in the belief that harnessing the power of AI to improve business outcomes requires a broad mix of technology - hardware and software - and full ecosystem support. Today, Intel AI solutions are helping customers turn data into business value and driving meaningful revenue for the company. In 2019, Intel expects to generate over $3.5 billion in AI-driven revenue, up more than 20 percent year-over-year. Together, Intel and Habana can accelerate the delivery of best-in-class AI products for the data center, addressing customers' evolving needs.

Shenoy continued: "We know that customers are looking for ease of programmability with purpose-built AI solutions, as well as superior, scalable performance on a wide variety of workloads and neural network topologies. That's why we're thrilled to have an AI team of Habana's caliber with a proven track record of execution joining Intel. Our combined IP and expertise will deliver unmatched computing performance and efficiency for AI workloads in the data center."


Habana will remain an independent business unit and will continue to be led by its current management team. Habana will report to Intel's Data Platforms Group, home to Intel's broad portfolio of data center class AI technologies. The combination gives Habana access to Intel's AI capabilities, including significant resources built over the last three years and deep expertise in AI software, algorithms and research, which will help Habana scale and accelerate its business.

Habana chairman Avigdor Willenz has agreed to serve as a senior adviser to the business unit as well as to Intel. Habana will continue to be based in Israel where Intel also has a significant presence and long history of investment. Prior to this transaction, Intel Capital was an investor in Habana.

"We have been fortunate to get to know and collaborate with Intel given its investment in Habana, and we're thrilled to be officially joining the team," said David Dahan, CEO of Habana. "Intel has created a world-class AI team and capability. We are excited to partner with Intel to accelerate and scale our business. Together, we will deliver our customers more AI innovation, faster."

Going forward, Intel plans to take full advantage of its growing portfolio of AI technology and talent to deliver customers unmatched computing performance and efficiency for AI workloads.

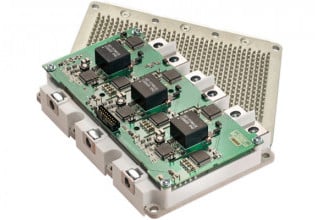

Habana's AI Training and Inference Products

Habana's Gaudi AI Training Processor is currently sampling with select hyperscale customers. Large-node training systems based on Gaudi are expected to deliver up to a 4x increase in throughput versus systems built with the equivalent number of GPUs. Gaudi is designed for efficient and flexible system scale-up and scale-out.

Additionally, Habana's Goya AI Inference Processor, which is commercially available, has demonstrated strong inference performance, in both throughput and real-time latency, within a highly competitive power envelope. Gaudi for training and Goya for inference offer a rich, easy-to-program development environment to help customers deploy and differentiate their solutions as AI workloads continue to evolve, placing growing demands on compute, memory and connectivity.