Ambarella Doubles Down on AI in Autonomous Driving Software Stack & SoC

Ambarella has announced that it has co-developed a new neural network processing stack and chip family for AI computing in autonomous vehicles.

When we interviewed edge AI semiconductor company Ambarella last year, the company was rethinking 4D automotive imaging radar with a centralized approach. Now, Ambarella has announced yet another way it intends to bring more intelligence into the automotive environment—this time, through an AI-focused software stack and underlying SoC (system-on-a-chip).

Ambarella's R&D vehicle fleet includes mono and stereo cameras, the company's Oculii 4D imaging radar, and the CV3-AD SoC for processing

To learn more about the news firsthand, we spoke with several members of Ambarella's team: Senya Pertsel, Sr. Director of Marketing for Automotive; Lazaar Louis, Director of Automotive Marketing; and Chris Day, VP of Marketing.

A Modular Autonomous Driving Software Stack

Ambarella's new software stack is a deep learning AI-based system that integrates neural network processing for perception, sensor fusion, and path planning to create a scalable and efficient solution for autonomous driving applications.

Notably, the new software stack moves from traditional algorithmic approaches to a neural network-based architecture. It employs separate neural networks for different aspects of autonomous driving, such as object detection and classification. This specialization means that each task is handled by a network optimized for it, which improves overall efficiency and accuracy. The stack also supports a number of OEM-specific sensing suites, including the option to add LiDAR.

Perception on the Ambarella software stack

The stack's sensor suite, which includes radars as well as monocular and stereo cameras, plays a crucial role in environmental perception. Sensor fusion combines data from these diverse sources to create a comprehensive view of the vehicle's surroundings. One benefit of this approach is that it removes the need for pre-built high-definition maps for navigation.

“We do not require an HD map anymore,” Pertsel said. “We just use a regular semantic map and can generate a high-definition map on the fly from perception and the standard map.”

According to Pertsel, the stack handles most of the heavy computation through deep learning that runs on Ambarella’s CV3-AD SoC, which significantly reduces the load on the CPU.

“Most of the heavy lifting is done by deep learning on the SoC, meaning the load on the CPU is very low,” he explained.

This optimization is crucial for real-time processing in autonomous vehicles, where delay or lag in decision-making can have serious consequences.

The CV3-AD SoC for AI Processing

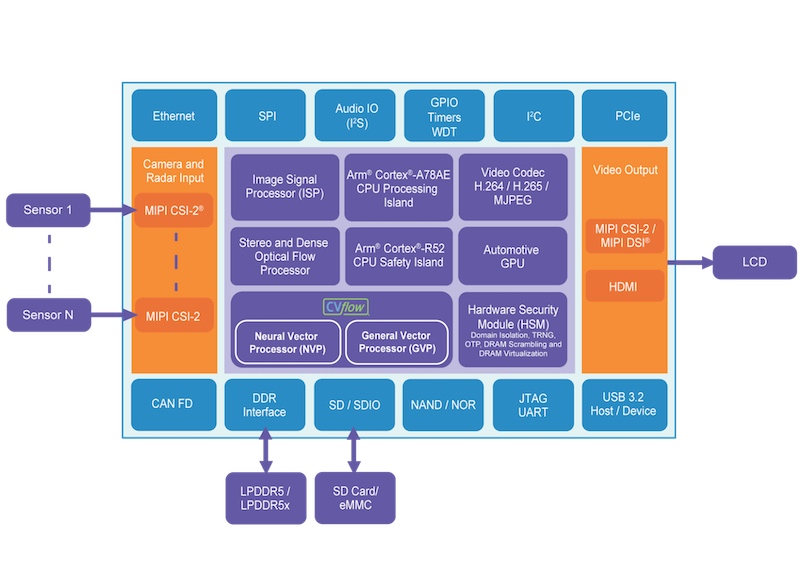

At the heart of the new software stack is Ambarella’s new CV3-AD SoC. Primarily serving as a central domain controller for AI computing in autonomous driving and ADAS, this SoC integrates a suite of advanced technologies while meeting ASIL-B requirements.

The CV3-AD design builds on a dedicated engine for stereo and dense optical flow processing, crucial for achieving accurate depth perception and understanding the movement of objects. The chip's architecture includes neural vector processors that support both 8-bit and 4-bit precision, which significantly boosts its AI processing throughput.
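To give a feel for what 8-bit versus 4-bit precision means for a neural network, here is a minimal sketch of uniform symmetric quantization in plain Python. It is a generic textbook illustration, not Ambarella's quantization scheme or tooling, which the article does not describe; the function names and the random test weights are assumptions made for the example.

```python
import random


def quantize_symmetric(values, bits):
    """Map floats to signed integer codes using one shared scale factor.

    Generic illustration only; not the scheme used on any specific chip.
    """
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


def mean_abs_error(values, bits):
    """Average absolute difference after a quantize/dequantize round trip."""
    codes, scale = quantize_symmetric(values, bits)
    restored = dequantize(codes, scale)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


# Hypothetical "weights": 1024 samples from a standard normal distribution.
random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(1024)]

error_8bit = mean_abs_error(weights, 8)
error_4bit = mean_abs_error(weights, 4)
print(f"8-bit mean abs error: {error_8bit:.4f}")
print(f"4-bit mean abs error: {error_4bit:.4f}")
```

The 4-bit codes take half the storage and memory bandwidth of the 8-bit ones, at the cost of a larger rounding error. That is the general trade-off behind offering both precisions in hardware.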

Block diagram of the CV3-AD

Further enhancing its versatility, the CV3-AD incorporates general vector processors for traditional computer vision and radar signal processing. These are complemented by up to twelve Arm Cortex-A78AE cores, which are designed specifically with automotive applications in mind. These cores offer robust processing power while maintaining low power consumption, a critical feature for electric vehicles. Because Ambarella developed the AD stack in conjunction with the CV3-AD AI domain controller SoC family, the stack runs efficiently on the SoC’s CVflow AI engines, which lowers both the power consumption and the processing load on the chip's Arm cores.

According to the company, the CV3-AD offers up to 5.7x performance in FPS, 4.3x power efficiency (FPS/watt), and 60% fewer external DRAM transfers compared to competitive GPU-based systems.
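As a rough reading aid, the two ratios above can be related by simple arithmetic: since efficiency is FPS per watt, dividing the FPS ratio by the efficiency ratio gives an implied power ratio. The sketch below assumes both "up to" figures come from the same workload and operating point, which the article does not state; the function name is hypothetical.

```python
def implied_power_ratio(fps_ratio, efficiency_ratio):
    """Power ratio implied by a throughput ratio and an FPS/watt ratio.

    efficiency = fps / power, so power_ratio = fps_ratio / efficiency_ratio.
    Only meaningful if both ratios were measured under the same conditions
    (an assumption here, not something the article confirms).
    """
    if efficiency_ratio <= 0:
        raise ValueError("efficiency_ratio must be positive")
    return fps_ratio / efficiency_ratio


ratio = implied_power_ratio(5.7, 4.3)
print(f"Implied power ratio: {ratio:.2f}x")
```

Under that assumption, the headline numbers would correspond to roughly a third more power drawn for 5.7 times the frame rate. If the two peak figures were taken from different benchmarks, this division does not apply.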

"By using a combination of Arm cores and domain-specific compute engines, we are able to achieve industry-leading power efficiency with this product line," Louis remarked. “With all the improvements we brought to the CV3-AD, we are looking at a 42x performance boost over our CV2 family.”

Ambarella Takes a Holistic View of Autonomous Tech

With better-performing hardware and new paradigms in software, Ambarella believes it can help push autonomous vehicles closer to reality.

"We believe that in order to design efficient hardware, you have to understand the full stack," Pertsel said. "That's why we get more efficiency and more AI performance per watt from our chips than our competitors. With that, we believe we can bring this technology to wide adoption on the mass market.”

All images used courtesy of Ambarella